TLDR | Agentic Data Stack (2026)

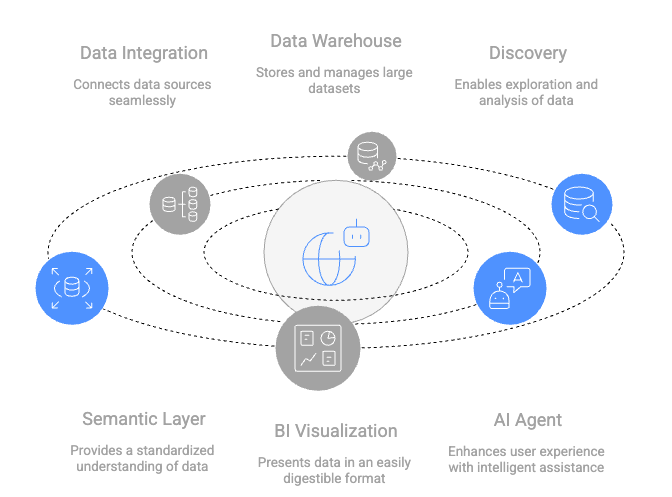

From Dashboards to Workers: In 2026, the focus has shifted from delivering dashboards to powering AI agents. For an AI agent to take reliable actions, it requires more than just raw data; it needs a “Map of the Business.”

Let’s explore the data and AI stack required to move from passive assistance to autonomous Agentic Workflows, where AI can safely navigate, reason, and execute tasks using business logic.

Highlights:

From Manual to Standardized Logic: Model Context Protocol (MCP) as the universal “handshake” between AI agents and data systems.

Unified Semantic Layer: dbt Fusion engine to define metrics once, ensuring the AI and the CFO are always looking at the same numbers.

Active Governance: With the rise of autonomous AI risks, prioritize automated audit trails and real-time data quality signals.

In B2B, we need to ensure the Data Warehouse (DW) and Business Intelligence (BI) layers speak the same language. Otherwise, our sales team and finance team see two different numbers for the same revenue metric.

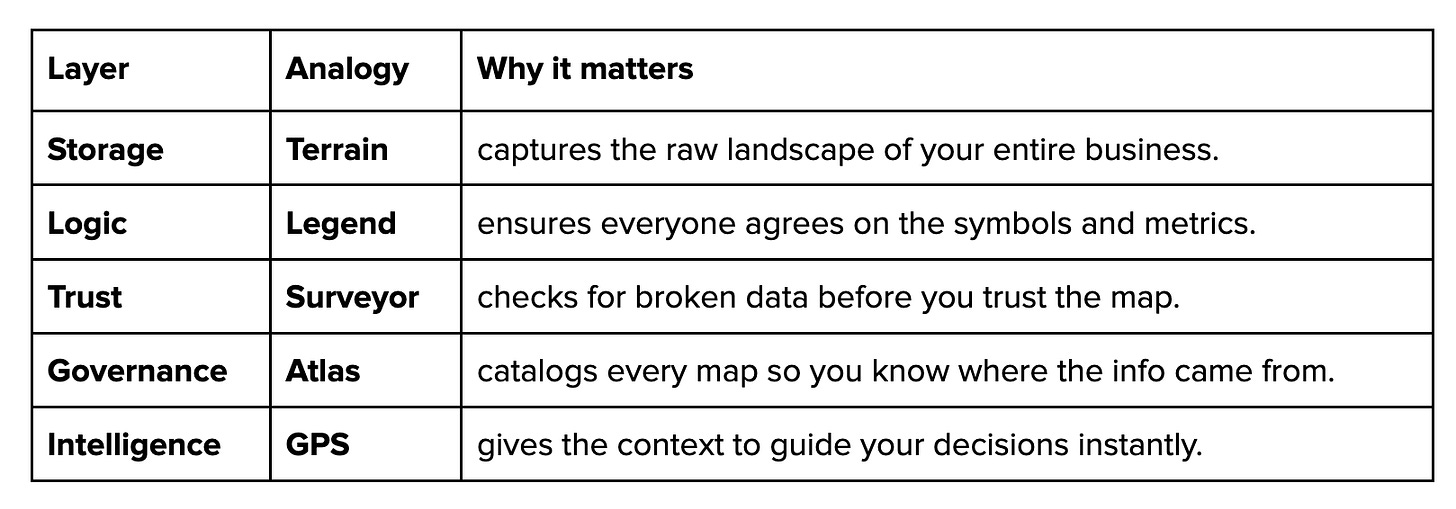

Data Map for Reliable AI

To build AI that actually works, we need a map that everyone—both humans and AI agents—can trust. Without it, your “GPS” leads you to the wrong conclusions.

Map Legend: One Version of the Truth

To ensure the map is readable, we have a Unified Semantic Layer and Master Data Management. Think of this as the “Map Legend”—it’s the single set of definitions that translates complex terrain into simple, agreed-upon terms.

Whether a human is looking at a dashboard or an AI agent is running a query, they both use the same “Instruction Manual” to define metrics like revenue. This eliminates the “two different numbers” problem and provides the Single Source of Truth needed for autonomous AI.

Trust across the data and AI stack

To build a trusted data and AI stack, we need a system where business logic is centralized so that “revenue” means the same thing to an AI agent as it does to the business and the BI analyst.

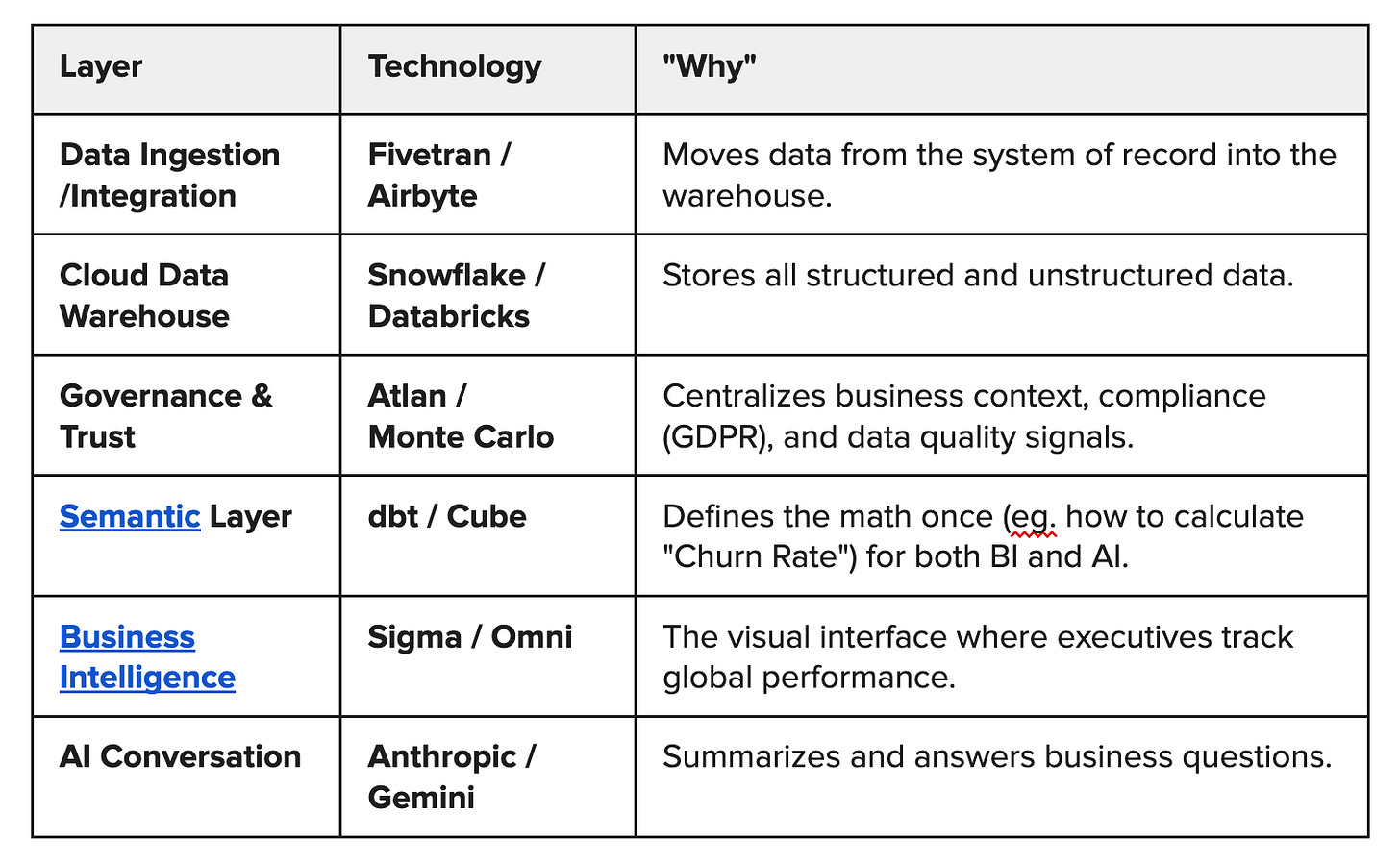

1. Unified Semantic Layer (“Single Truth”)

For a company at scale, the most critical component is a Unified Semantic Layer. It decouples business logic from your BI tools and your AI models.

If you update a churn definition, you change it once in the semantic layer. Every dashboard and every AI prompt response updates automatically.
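The "define once, use everywhere" idea can be illustrated with a minimal sketch. The `Metric` class, the `SEMANTIC_LAYER` registry, the `orders` table, and the `compile_metric` helper are all hypothetical names invented for this example; they are not the API of dbt or any other semantic layer product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Metric:
    """One governed metric definition (hypothetical structure)."""
    name: str
    table: str
    expression: str        # SQL aggregate, e.g. "SUM(amount)"
    filters: tuple = ()    # SQL predicates applied to every query


# Hypothetical registry standing in for the semantic layer.
# Changing a definition here changes it for every consumer.
SEMANTIC_LAYER = {
    "revenue": Metric(
        name="revenue",
        table="orders",
        expression="SUM(amount)",
        filters=("status = 'completed'",),
    ),
}


def compile_metric(metric_name: str, group_by: Optional[str] = None) -> str:
    """Compile a metric into SQL; dashboards and agents both call this."""
    metric = SEMANTIC_LAYER[metric_name]
    select = f"{metric.expression} AS {metric.name}"
    if group_by:
        select = f"{group_by}, {select}"
    sql = f"SELECT {select} FROM {metric.table}"
    if metric.filters:
        sql += " WHERE " + " AND ".join(metric.filters)
    if group_by:
        sql += f" GROUP BY {group_by}"
    return sql


# A dashboard and an AI agent asking for the same metric get the same SQL.
dashboard_sql = compile_metric("revenue")
agent_sql = compile_metric("revenue")
regional_sql = compile_metric("revenue", group_by="region")
```

Because neither consumer writes its own `SUM(...)` expression, a change to the filter or the aggregate in the registry reaches both on the next query.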

2. Cloud Data Warehouse (“Storage”)

A company at scale handles massive volumes of real-time and batch data. A cloud-native warehouse is essential because it separates storage from compute, so each can scale independently.

3. Governance & Trust (“Guardrails”)

In B2B, trust is built on security and compliance (GDPR, SOC 2). Your stack should include Data Observability to monitor data quality.
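As a rough illustration of what a data quality signal might look like, the sketch below computes a null rate and a freshness check for one batch of rows. The function names, the 5% null threshold, and the one-hour freshness window are assumptions chosen for the example, not values from any specific observability tool.

```python
from datetime import datetime, timedelta, timezone


def null_rate(rows, column):
    """Fraction of rows where `column` is missing (None)."""
    if not rows:
        return 1.0  # assumption: treat an empty batch as fully missing
    missing = sum(1 for row in rows if row.get(column) is None)
    return missing / len(rows)


def quality_signal(rows, column, last_loaded_at, now,
                   max_null_rate=0.05, max_age=timedelta(hours=1)):
    """Combine completeness and freshness into one trust signal."""
    rate = null_rate(rows, column)
    fresh = (now - last_loaded_at) <= max_age
    return {
        "null_rate": rate,
        "fresh": fresh,
        "trusted": fresh and rate <= max_null_rate,
    }


# Illustrative batch: one of four rows is missing its amount.
now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
rows = [{"amount": 10.0}, {"amount": None}, {"amount": 5.0}, {"amount": 7.5}]
signal = quality_signal(rows, "amount", now - timedelta(minutes=30), now)
```

An agent could check `signal["trusted"]` before acting on the table and fall back to a human when it is false.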

Data Ingestion / Integration

In 2026, integration has shifted from “Batch” (nightly updates) to Agentic & Event-Driven.

Where it sits: Between your source apps (Salesforce, Jira) and your Data Warehouse.

Model Context Protocol (MCP) allows AI to “reach out” and grab data from other apps (like a Jira ticket or a calendar) in real time. MCP is now the universal standard that allows AI agents to “read the map” (the semantic layer) securely.

Fivetran/Airbyte: For moving structured data into your warehouse (Snowflake/BigQuery).

Streaming (Kafka): For real-time context (e.g., “A user started a webinar, notify the sales rep now”).
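To make the MCP “handshake” more concrete: MCP messages are JSON-RPC 2.0, and an agent invokes a server-side tool with a `tools/call` request. The sketch below only builds that request message. The tool name `query_metric` and its arguments are hypothetical, standing in for whatever a semantic-layer server might expose, and the transport (stdio or HTTP) is left out.

```python
import json
from itertools import count

# Monotonic request ids so responses can be matched to requests.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# `query_metric` and its arguments are hypothetical, not part of MCP itself.
request = build_tool_call(
    "query_metric", {"metric": "revenue", "group_by": "region"}
)
```

The agent names a governed metric and does not write raw SQL, so the server can resolve the request against the same definitions the dashboards use.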

Business Intelligence

BI tools are evolving into Warehouse-Native platforms. Instead of moving data into the BI tool, the BI tool lives on top of the warehouse.

Where it sits: On top of the Semantic Layer. It is the interface where humans verify what the AI is seeing.

Tools such as Sigma and Omni are replacing older tools because they don’t require “data extracts.” They query the live data warehouse directly.

Industry Shift: There is an Open Semantic Interchange (OSI) standard supported by Snowflake, Salesforce, and dbt Labs to ensure these definitions work across all tools.

2026 has seen a surge in security incidents involving exposed AI context servers, which makes our “Trust/Surveyor” layer more relevant than ever.

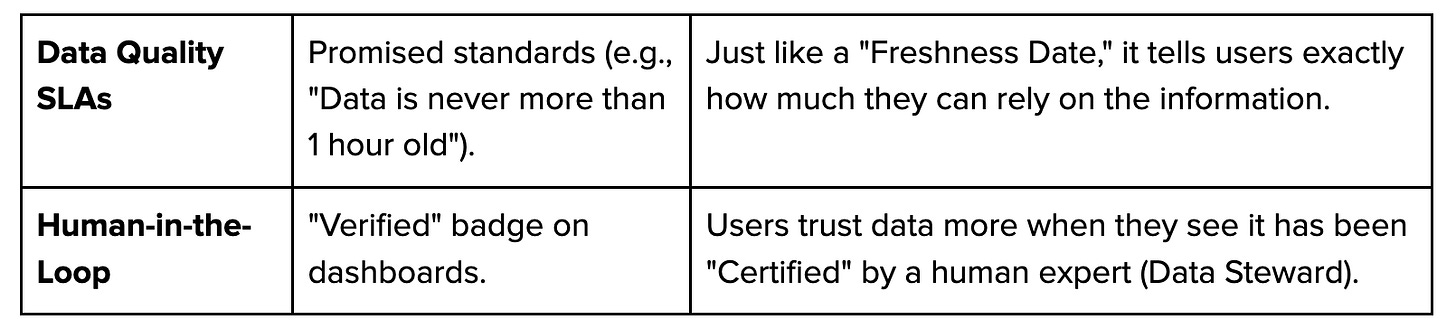

Trust Infrastructure Checklist

To build a data and AI stack people actually rely on, look for these elements:

2026 Readiness Checklist

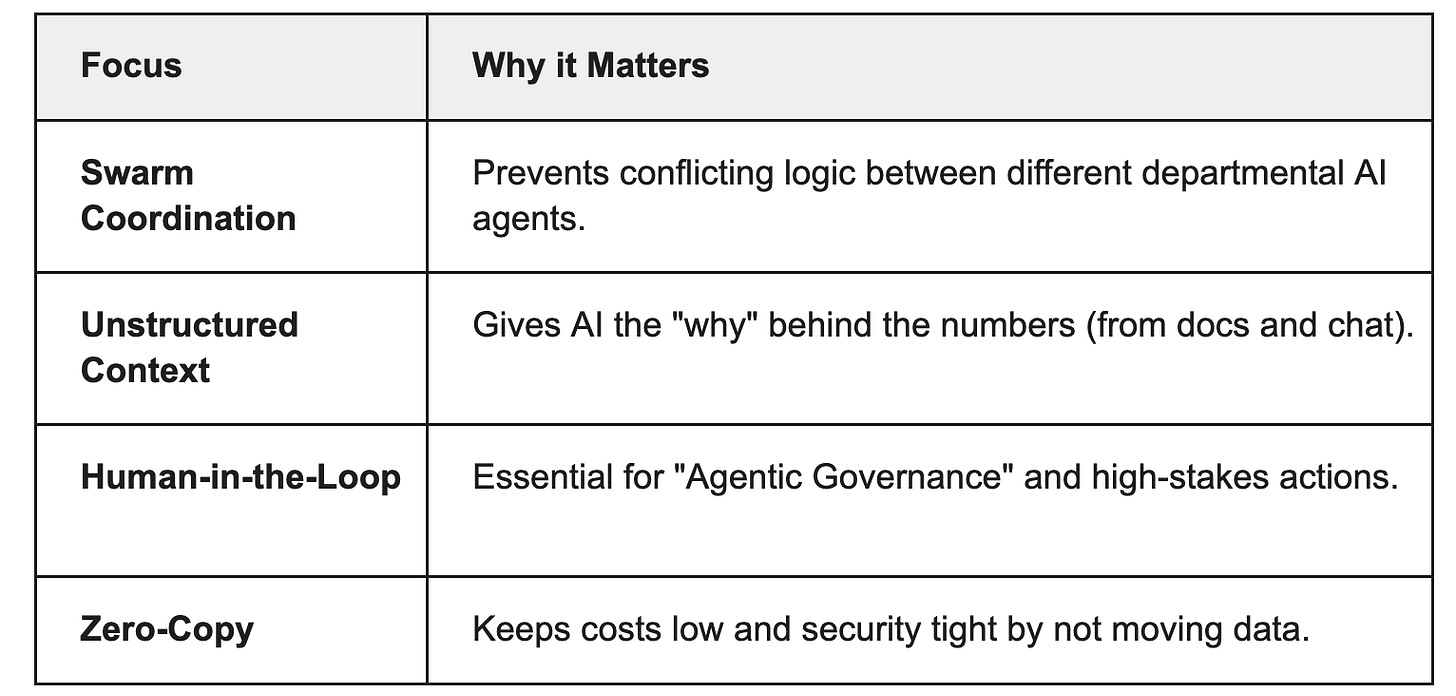

To move from “AI as a pilot” to “AI as a teammate,” your stack needs to address these critical gaps that have defined the data landscape in 2026:

Multi-Agent Coordination (The “Swarm”): Modern work is rarely handled by one AI. You have a “Finance Agent” and an “Inventory Agent.” Without a shared Semantic Layer, these agents will give conflicting answers. Coordination ensures all agents speak the same language.

Unstructured Data Integration: Most business logic lives in PDFs, emails, and Slack, not just SQL tables. A trusted data and AI stack pulls this unstructured context into the same framework so agents can “read” the company handbook as easily as they read a revenue report.

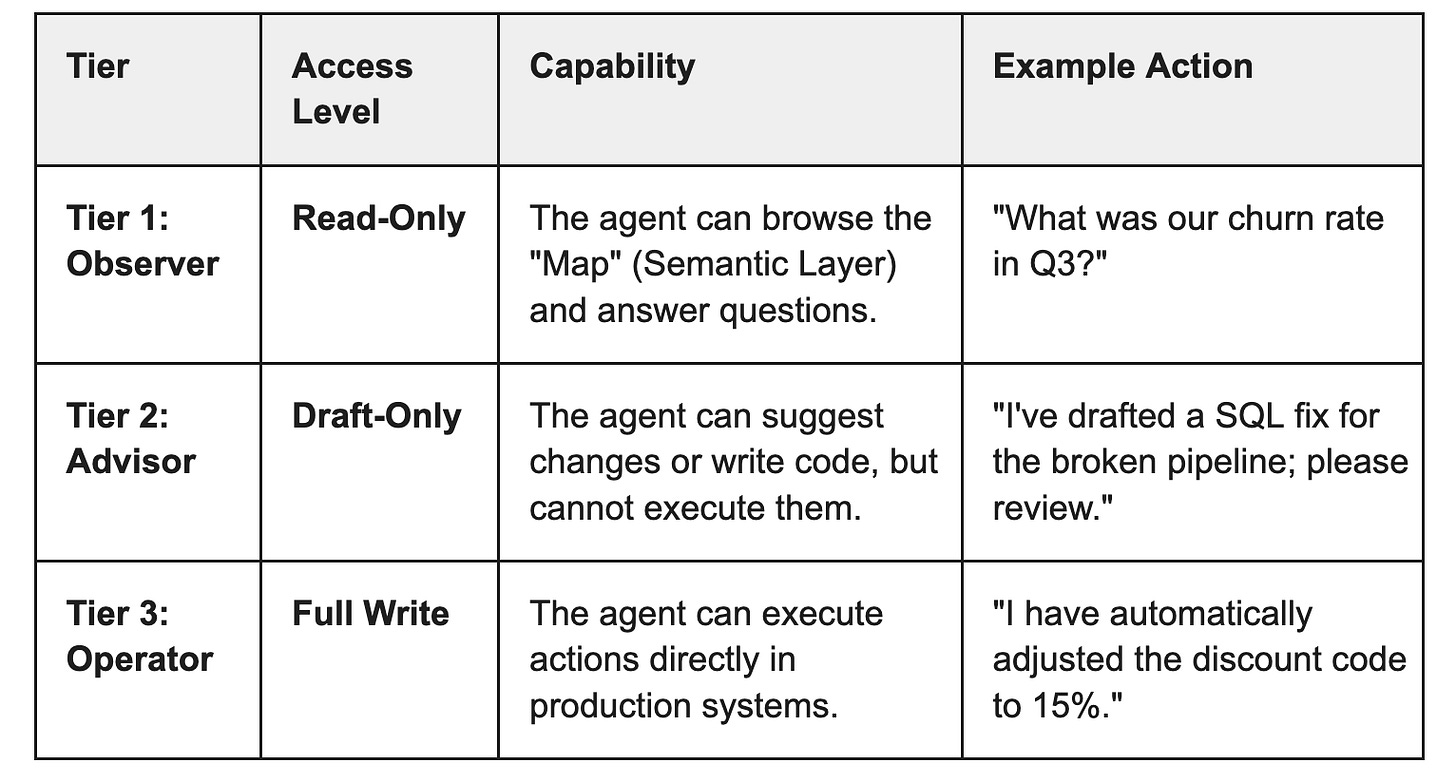

Kill Switch (Governance): Trust requires a safety valve. With Graduated Authority Models, agents can “Read” and “Analyze” freely, but require human-in-the-loop “Write” permissions for high-stakes actions like processing payments or adjusting prices.

Zero-Copy Architectures: To avoid the “data shockwave” of spiraling storage costs and security risks, data stays in the warehouse. Zero-Copy tools bring the AI to the data, rather than moving sensitive info into third-party AI silos.
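The Graduated Authority Model described under Kill Switch can be sketched as a small gatekeeper that every agent action passes through. This is a minimal illustration, assuming three levels named after the “Read”, “Analyze”, and “Write” actions mentioned above. The `Gatekeeper` class, the global `kill_switch` flag, and the audit record fields are hypothetical design choices, not a reference implementation.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Authority(IntEnum):
    """Assumed authority levels, lowest risk first."""
    READ = 1
    ANALYZE = 2
    WRITE = 3


@dataclass
class Gatekeeper:
    """Decides whether an agent action may proceed and records every decision."""
    audit_log: list = field(default_factory=list)
    kill_switch: bool = False  # assumption: one flag that halts all agent actions

    def authorize(self, agent, action, level, approved_by=None):
        if self.kill_switch:
            decision = "blocked"
        elif level == Authority.WRITE and approved_by is None:
            # High-stakes actions wait for a human in the loop.
            decision = "needs_approval"
        else:
            decision = "allowed"
        # Every decision, including refusals, lands in the audit trail.
        self.audit_log.append({
            "agent": agent,
            "action": action,
            "level": level.name,
            "approved_by": approved_by,
            "decision": decision,
        })
        return decision


gate = Gatekeeper()
read_decision = gate.authorize("finance_agent", "read revenue", Authority.READ)
write_decision = gate.authorize("finance_agent", "adjust price", Authority.WRITE)
approved_decision = gate.authorize(
    "finance_agent", "adjust price", Authority.WRITE, approved_by="cfo"
)
```

Routing every action through one function keeps the audit trail complete, since denied and pending actions are logged alongside allowed ones.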

3 Tiers of Authority

Conclusion:

In the Agentic era, the data stack is no longer just a reporting tool; it is the Operating System for AI. By focusing on a “Legend” (Semantic Layer) and a “Surveyor” (Governance), we aren’t just building better charts: we are building an autonomous organization that we can actually trust.